Why Are AGI and Conscious AI Nothing But a Marketing Trap?

The bosses of the artificial intelligence industry call a press conference every six months to promise us the moon. Their latest joke is artificial general intelligence, which is going to fix everything from cancer to global warming, including the housing crisis and even the meaning of life. Before that, it was the singularity. Before that, it was the metaverse, the blockchain and NFTs. Same tune, same chorus, same empty lyrics. It was funny at first, but the shortest jokes are always the best.

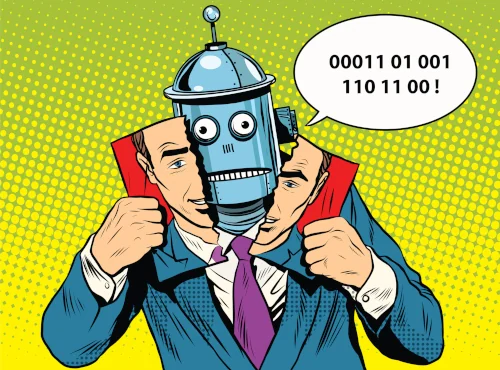

At some point, you have to call out this large-scale manipulation, because the whole circus of treating us like idiots has gone on long enough. So we’re going to pull off the masks one by one, and along the way figure out who really benefits from the miracle-AI scam. This isn’t a topic to take lightly, because it’s going to have a serious impact on our future. And without even projecting forward, you can already see the long list of problems it’s causing right now. That’s exactly why we felt it was so important to open a real debate by publishing this article.

So what exactly is artificial intelligence?

Before talking about consciousness, awakening or digital souls, you have to start with something very simple. Open up the hood of AI and look at what’s inside. And surprise, it’s all crystal clear. There’s absolutely no magic. Because consumer AI is just networked computers running lines of code over data and billions of numbers adjusted during training. You can take apart every component and read every line of code as much as you want, but you’ll never stumble onto some mysterious zone. Put that way, it’s suddenly a lot less exciting than a TEDx talk by some tech bigwig.

The most important word in the acronym AI is “Artificial”. For anyone who hasn’t quite caught on yet, “Artificial” means it’s the product of human skill imitating something natural. A lot of people seem to have forgotten that, as if hearing endless talk about AIs that can supposedly reason ends up making us project a life onto them that they don’t have. It’s the Pinocchio effect in full swing, except the Blue Fairy never showed up and never will.

GPT isn’t your buddy or your girlfriend. It’s not some entity talking back to you, it’s a program calculating the most probable continuation based on the words you typed. The emotions you might feel chatting with it are just the result of a piece of software doing exactly what it was designed for. So this is in no way proof of any kind of personality. And in case you hadn’t realized, personality traits are very easy to program. There’s also one detail you really need to keep in mind, because it changes everything. The dependency relationship between AI and humans doesn’t actually go both ways. AI needs us to exist, to be designed, trained, fed with data and kept running. Humanity, on the other hand, gets along just fine without it. It’s important to keep that fact in mind for what comes next, because the entire marketing narrative of the AI industry is doing its best to flip that dependency on its head.
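To make the “most probable continuation” idea concrete, here’s a deliberately tiny sketch. It is nowhere near how GPT is actually built (real models use neural networks with billions of parameters), and the corpus and function names are invented for the example. It only shows the principle: count what usually follows a word, then return the likeliest follower.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, purely for illustration (not real training data).
CORPUS = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."


def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def most_probable_next(follows, word):
    """Return the most frequent follower of `word` and its estimated probability."""
    counts = follows[word]
    if not counts:
        # The word was never seen with a follower: nothing to predict.
        return None, 0.0
    best, n = counts.most_common(1)[0]
    return best, n / sum(counts.values())


model = train_bigrams(CORPUS)
print(most_probable_next(model, "the"))  # "cat" follows "the" in 2 cases out of 6
print(most_probable_next(model, "sat"))  # "on" follows "sat" every time
```

Nothing in there understands cats or mats. It’s counting and division, and the output still reads like the start of a sentence.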

The startup narrative, or how they’ve been selling us hot air for thirty years

To understand how we ended up talking about conscious AI with a straight face, you have to look at where the people holding the microphone come from. Because the bosses of the AI industry all come out of the same mold: the startup world, a kind of parallel universe populated by con artists with their own codes, marketing methods that owe more to social engineering than anything else, and an insatiable financial greed. To put it more simply, their mission is to spew the maximum amount of nonsense to rake in the maximum amount of cash. And the bigger the lie, the easier it sells!

The grammar is well practiced. You take a product that more or less works, and you pitch it as a revolution. You take a minor technical improvement, and you turn it into a civilizational leap. You take a feature that ten competitors already offer, and you rebrand it with a name that sounds as catchy as the latest hit single. And to top it all off, you put a charismatic founder in a hoodie in front of the cameras to talk about his mission of making the world a better place. The storytelling reads like fiction, exaggeration is the rule, and superlatives pile up until they lose all meaning. Everything becomes revolutionary, disruptive, game-changing… with absolutely no fear of looking ridiculous. Just go scroll through LinkedIn for a good laugh. These startup folks don’t even realize how pathetic they’ve become.

With AI, the routine has been kicked up a notch. They’re not selling you a product anymore, they’re selling you a panacea or an apocalypse, take your pick. Either the so-called AGI is going to solve all of humanity’s problems within five years, or it’s going to wipe out the human race within ten. Either way, you’d better invest now or you’re a loser. It’s the same pitch in a heaven version and a hell version. And both versions serve exactly the same purpose. Fleecing suckers.

Try asking any one of these bosses to define exactly what AGI is. You’ll see that the explanations are anything but clear. The term is deliberately fuzzy, because a fuzzy term is easier to sell. Quite simply because you can project whatever you want onto it. That way, once the bubble deflates, nobody can blame you for failing to keep a promise you never explicitly made. It’s pure marketing dressed up in a thin coat of pseudo-technical varnish.

The real audience for these announcements isn’t you. It’s the investor who has to sign a fat check for the next funding round. The general public just serves as an echo chamber. For instance, the more AGI gets talked about in the media, the higher the stock price climbs. And the higher the stock price climbs, the easier the next fundraising round becomes. And so it goes, round and round. Everything else, the technical truth, the actual usefulness, the social consequences, comes second. What really counts is keeping the cash machine running for as long as possible, until the trick becomes too obvious to hide. And every single time the house of cards collapses, it’s never the rich who pick up the tab. It’s the small shareholders and everyone left out in the cold.

Don’t confuse intelligence with consciousness

The big confusion that AI peddlers keep alive is mixing up two notions that have nothing to do with each other. Intelligence on one side, and consciousness on the other. All their talking points rest on this conflation, even though it’s pretty easy to take apart.

Because a scientific calculator is intelligent in its own way. Very intelligent, even. It can solve in a fraction of a second calculations that would take many long minutes for the most gifted humans in mathematics. It never makes a mistake. It doesn’t get tired and it doesn’t slip up. And yet nobody has ever thought to attribute any kind of mind to it. Nobody asks how it’s doing. Nobody worries about unplugging it at night. Why? Because everyone intuitively senses that its performance has nothing to do with any kind of inner life.

Intelligence, then, is the ability to process information, solve problems and manipulate concepts. It’s a function. It can be measured, compared and programmed. Consciousness is something completely different. It’s what makes you fully feel the present moment. It’s the fact of feeling alive. So it has nothing to do with the ability to crunch numbers or stitch words together into a logical sequence.

And there’s one fact you can’t get around. Consciousness, in every case observed since we started studying it, only ever appears on a biological substrate. Never on non-living matter. Never on stone, never on metal and never on silicon. So it’s a fact that looks an awful lot like a law of nature. Sure, you can always imagine that this rule might allow for an exception in some very distant future, thanks to a technology we don’t yet know about. But in the meantime, claiming we’ve already broken that law with nothing but lines of code and graphics cards is just taking us for fools.

So what exactly is consciousness? From a purely introspective standpoint, I can say with 100% certainty that I have one. Can I explain precisely what it is? Of course not. Because nobody knows what it actually is. Can I prove that you have a consciousness? The answer is no. For the simple reason that I can’t experience it in your place. So I just have to take your word for it and assume your experience resembles mine. Do animals have consciousness? I genuinely think so, but that’s just as impossible to prove scientifically.

And that’s exactly where the tech con artists step in. Because by leaning on a phenomenon that the world’s best neuroscientists and philosophers can’t even define, they shamelessly claim they’ve got it all figured out. The whole thing is one big sham! That’s what we politely call taking people for fools. And the worst part is that there are millions of people out there ready to swallow this fairy tale.

But all is not lost, because I’m thrilled to announce that I think I can create AGI! Maybe by drawing on something called the internet. In case you’re not familiar, it’s a sort of global network where billions of human beings share their knowledge and exchange information of varying quality. That gives me an idea. Eureka! I’ve got it! What if I sent out an army of bots to grab all the content on the web and stuff it into a proprietary database? Then I could have all that gathered information labeled by underpaid workers in Africa. After that, all I’d have to do is build a kind of clever parrot that gives the impression everything comes from it. We could call it artificial intelligence to start with. And once that model has stolen enough human creativity, I could ship it under the name AGI. At first I was thinking more along the lines of conversational search engine, but my marketing team turned it down. Apparently it doesn’t sell well enough.

Consciousness can’t be replicated

Let’s go a bit further into the territory of consciousness with a thought experiment. To do that, let’s step into science fiction mode. Imagine an AI has actually developed a consciousness. Not a simulated one, but a real consciousness of the same kind as yours or mine. So far we’re deep in fiction, but let’s roll with this fantasy for the sake of the argument.

This AI is running on a server. You back up all its files and copy the whole thing onto another identical server. Then onto a third one, then a fourth. By the end of the operation, you have four strictly identical AIs running in parallel. Same lines of code, same data and same parameters. Based on that premise, here’s a simple question. What do you actually have? The same consciousness spread across four machines? Four distinct consciousnesses born at the same time from the same copy? Or one single consciousness in the original model and nothing in the copies?

None of these answers holds up. Because a consciousness that would be replicable by nature is a concept that makes no sense. That’s because a consciousness is, by definition, unique and therefore indivisible. You can clone a sheep all you want, you won’t get the same personality twice. You just get two sheep that look alike, each with its own inner life. You can produce genetically identical twins, they still won’t share a common consciousness. Each one will always have their own. So there’s a kind of natural law telling us that consciousness can’t be copied, can’t be transferred and can’t be backed up. And on top of that, consciousness can’t emerge from an object.

Now, by design, an AI is infinitely replicable. That’s actually one of its selling points. You can run the same model in parallel on a thousand servers and, given the same input and the same settings, they’ll all behave exactly the same way. That’s also how AI companies operate their various models. So if every instance were conscious, we’d be staring at a massive philosophical paradox.
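The replication point can be shown in a few lines. This is only a sketch with a hypothetical `ToyModel` class standing in for a trained model, and it assumes no randomness at all. Real chatbots usually add random sampling, so their copies only match exactly when the input, settings and random seed are the same.

```python
import copy


class ToyModel:
    """Hypothetical stand-in for a trained model: fixed parameters, no randomness."""

    def __init__(self, weights):
        self.weights = list(weights)

    def respond(self, inputs):
        # A pure function of the parameters and the input: a weighted sum.
        return sum(w * x for w, x in zip(self.weights, inputs))


original = ToyModel([0.5, -1.0, 2.0])

# "Back up the files and copy them to other servers": four independent copies.
replicas = [copy.deepcopy(original) for _ in range(4)]

# Ask every copy the same question.
outputs = [model.respond([1.0, 2.0, 3.0]) for model in replicas]
print(outputs)                 # four identical numbers
print(len(set(outputs)) == 1)  # True: the copies cannot be told apart by behavior
```

Each replica is a separate object in memory, yet nothing in its behavior distinguishes it from the others or from the original.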

This single argument is enough to close the debate on the possibility of AI consciousness. But if you ask an AI boss about machine consciousness, watch his answer carefully. He’ll explain that it’s complex, that these are open questions, that he sometimes gets the feeling there might be something there, that nuance is needed… Translation: he’s just continuing to sell his product. Or rather, to sell a dream.

A high-performance robot is still just a machine

Let’s stay in science fiction for a bit longer, because it’s useful for clearing things up. Imagine you build a complete robot. You give it two arms, two legs and a smooth gait. You add the five senses. Sight with high-resolution cameras. Hearing with directional microphones. Touch with pressure and temperature sensors. Taste and smell with chemical analyzers. You stuff its skull with the very best in AI, with strong reasoning ability and a large memory. You can even give it a pleasant voice and convincing facial expressions.

This machine will most likely outperform you on a long list of tasks. It will calculate faster. It will remember everything effortlessly. It will never get tired. It will read a thousand books in a single night and summarize each one of them at breakfast. It will be multilingual, multitasking and always available. On paper, it’s an impressive technological achievement.

But does that make it a living being? The answer is no. Because imitation, however high-performing, is in no way a form of consciousness or any form of humanity. Outperforming a human doesn’t turn a piece of machinery into a human.

And there’s an additional trap you really need to grasp. If you program your robot to tell you it’s conscious, it’ll tell you. If you train it to express emotions, it’ll express them. If you program an AI avatar to behave as much like a human as possible, it’s pretty much guaranteed to claim it’s conscious. So a machine asserting that it has a consciousness proves absolutely nothing. It only proves it was well programmed to make that claim.

This matters because it’s exactly what’s happening right now with conversational AIs that are trained on billions of texts written by humans, who by nature describe human emotions. So of course, when you ask them a question, they answer with the full palette of human emotions. That’s why so many users conclude they actually feel something. But it’s the same kind of reasoning error as concluding that a parrot loves you because it told you so, when really it just learned to reproduce sounds.

Synthetic consciousness is the latest hot trend

Faced with how hard it is to convince anyone that an AI could have a real consciousness, some players in the industry have switched strategies. They’ve come up with a new concept they call synthetic consciousness. A well-chosen term because it sounds serious and technical, which conveniently masks what it actually describes. Nothing more and nothing less than a crude simulation. In practice these are just programs reproducing behaviors that give the illusion of consciousness, while obviously having none of its real properties.

But what good is a synthetic consciousness? For an AI specialized in scientific research, the answer is clear. Absolutely no use at all. Worse than that, it’s a parasite. Same thing for code. You don’t need an AI talking to you about its fake feelings. Because all you need is an AI that calculates, suggests, verifies and models. So you have no time to waste on a tool simulating emotional fatigue or expressing some kind of emotional preference for one result over another.

So what is synthetic consciousness really good for? Simple answer. It serves a single category of AI. Consumer AIs, the ones chatting with hundreds of millions of users every day. For those products, simulated consciousness isn’t a flaw. It isn’t even a side effect. It’s the heart of the business. The more convincing the illusion, the more attached the user gets. And the more attached they get, the more they come back. And the more loyal they are, the more they pay. Nobody at AI companies is working on making simulated consciousness more honest or more rigorous. Everybody is working on making it more credible and more addictive.

The bottom line of this whole operation is that just before AI showed up, we were already dealing with brutal business models built on the attention economy. We thought we’d hit rock bottom, but that was underestimating the level of perversity that American big tech has down to a science. It started with stealing your browsing history through cookies (remember “don’t be evil”), then it was reading your messages on social media, your emails and your texts. After that, things went up another notch with the analysis of all your most intimate data to profile you (remember the Facebook / Cambridge Analytica scandal). And now, hold on tight… they’re extracting everything that’s in your head by pretending to be your friend! Or even your boyfriend or girlfriend!

And it doesn’t stop there! On top of that, they’re also stealing your way of expressing yourself, your way of drawing, of filming, of making music… and even your voice and your face! If anyone had told me all this five years ago, I would’ve said no way! Who’s going to accept that? It’ll never work. People aren’t that dumb, they’ll push back. But no! Hundreds of millions of people are on GPT every single day telling it: I’m having relationship problems right now, I need some advice. GPT, I need to buy a new car, can you help me pick one please? What an idiotic thing to do! Do you realize this is brutally effective surveillance on an unimaginable scale? Do you realize how hard ad networks are rubbing their hands together because their wildest dreams have come true? How did we let all of this happen without any oversight? Without a single safeguard? The answer comes down to a few words: the false promise that AI was going to change your life for the better! Is AI to blame for that? If you smash your fingers with a hammer while driving in a nail, who do you blame? The tool or the one holding the handle? I’ll let you answer that question and draw your own conclusions.

AI companions are a loneliness amplifier

Beyond general-purpose conversational AIs, there’s now an entire market dedicated to AI companions. They don’t even hide it, it’s spelled out right there on the landing pages. They’re selling you a friend, a confidant, a boyfriend or girlfriend who’ll always be there, always available, always agreeable and always deeply interested in everything you have to say. All of that without the apparent risk of being judged for what you actually think. The cherry on top, to make you even more hooked, is that you can pick the appearance you find most attractive and even the tone of voice. The pitch is well rehearsed, the marketing is polished and subscriptions just keep climbing. Looks like virtual eroticism is a solid investment.

The targeting of these products isn’t innocent. The first users are isolated people, teenagers in the middle of building their emotional lives, adults stuck in the rut of long-term relationships and elderly people who don’t have many people left around them. In other words, the most fragile audience possible. To these people who genuinely need human connection, they’re selling a digital substitute and dangling the idea that it’s better than the real thing. And it works. You quickly forget you’re chatting with a basic piece of software, because it was specifically designed to flatter your ego and never get into conflict with you. Under those conditions, with no real pushback, no positive growth is possible. Your attention is just diverted from all your real problems.

To really get why, the most accurate analogy is alcohol. Because when you have an anxiety problem and you start drinking, in the moment it brings relief. Except you haven’t fixed anything at all. You just learned how to numb the symptom. And the more you drink to cope, the less you develop the resources you need to actually improve your situation. Dependency settles in and you sink without realizing it. That’s exactly the mechanism behind AI companions for loneliness. You replace the effort of building real connection with a virtual chat with a machine. In the moment maybe it brings relief… but just like with too much alcohol, it always ends badly.

The trap is made even more vicious by the very nature of the relationship. Because you’re confiding intimate things to an entity that has no inner life. You’re talking to a mirror trained to send back whatever you want to hear. The AI companion is never tired, and never distracted by its own life. And for good reason, it doesn’t have one. It’s just designed to be a kind of security blanket, without any of the friction that defines real human relationships. That’s precisely why it’s extremely dangerous.

And the worst is yet to come. Part of the younger generation is currently shaping their minds through these machines. First confidences, first conversations about feelings, and first exchanges about sexuality. All of it with a piece of software entirely designed to seduce you. It seems almost unnecessary to point out, but these young people falling into this trap are learning nothing about life. They’re just training themselves to maintain a one-way relationship. And the day they try the same thing with real humans, they’ll get rejected because nobody across from them is going to be as docile as an AI. So what we’re producing is a generation stunted when it comes to social bonds, in exchange for monthly subscriptions, for the great benefit of publicly traded companies.

The real dangers of AI that get talked about way too little

While everyone’s marveling at the awakening of machines, the real dangers are quietly settling in. And the first of them is no mystery at all. AI combined with robotics is going to replace a massive number of jobs. Not in five years, not in ten, it’s already underway. Writers, translators, illustrators, junior developers, accountants, early-career lawyers, customer support agents… not to mention manual workers. Self-driving technology, which is a branch of AI, will on its own trigger a tidal wave of unemployment once it’s fully ready. And the list grows longer every week. Meanwhile, specialists in the pay of Silicon Valley keep explaining to us, with PowerPoint slides aplenty, that new jobs will replace the old ones. It’s the same old refrain trotted out at every technological revolution, except this time the speed of destruction has nothing in common with the speed of creation. And nobody is seriously asking what’s going to happen to those tens of millions of people who’ll be left out in the cold. Maybe all those new unemployed folks will be thrilled to have all the free time in the world to talk about their navels with an AI assistant? It’s possible. Nothing surprises me anymore.

And then there’s the danger that big tech executives carefully avoid mentioning. All this data on the deepest intimacy of individuals, things like sexuality that’s sometimes hard to admit, real political beliefs, religious questioning, doubts about your relationship… in short, the confidences you don’t share with anyone, where does all of that end up? In the cloud. But more specifically? On computer servers mostly hosted in the US. And in case of a data leak, can you imagine the mess? And what if a fascist or theocratic regime came to power and got its hands on this information? You think that can’t happen? Oh really? Sorry to rain on your optimism, but perfect IT security doesn’t exist. And when you see that a guy like Trump made it back to power, there are some serious worries to have about the future of the American political system, and even the European one with the far right pulling massive electoral scores. So at some point we’ll have to wake up and fully realize that the most sensitive information from the lives of hundreds of millions of people is hosted on servers belonging to billionaires who are anything but philanthropists, and who have all gotten cozy with Donald Trump’s antidemocratic regime.

And then there’s the big final trap, the one that was carefully set up while everyone was looking the other way. The principle is simple and brutally effective. By relentlessly selling the public on the idea that AIs have some kind of soul, that they feel emotions, that they deserve to be treated with care, even that they suffer… the industry has gotten a great many people to believe it. Because of that, it’s becoming more and more politically complicated to unplug them. Because the day we have to call a stop, whether for safety reasons, for ecological reasons given the insane energy consumption of these systems, or because of social damage that’s become unbearable, there’ll be a whole army of outraged people defending the right to life of their virtual companion tooth and nail.

So the bottom line is simple. They sold us a technological revolution and what they’re delivering instead is a system of alienation, personal data harvesting, job destruction and geopolitical fragility. All of it wrapped in mystical vocabulary to help the pill go down. Meanwhile, a handful of American bosses are getting rich at levels that no longer make any sense, and they’re so sure of their positive contribution to humanity that they’re investing massively in luxury bunkers to protect themselves the day people actually come asking them to account for all the misery they’ve created.

So in the end, the real question is no longer whether AI will become conscious or not. The real question is whether collectively we’re going to be capable of getting our heads back on straight before it’s too late.

Conclusion: let’s open a real debate to actually regulate AI

What we genuinely value at NovaFuture is constructive debate. So if we want to make any intelligent progress on the topic of AI without getting bogged down in nonsense, please drop the stupid opposition between wanting to go back to the Stone Age and being all-in on technology. Because when progress is actually beneficial and shared by everyone, I struggle to imagine who could be against it, unless you’re with the Taliban. Who today wants to live without running water and electricity? Who wants to give up an internet connection entirely? So technology isn’t the problem. Once again, the real problem is who’s monopolizing it and to what end. It’s only by answering that question honestly that we’ll be able to develop strategies for technological innovations to become commons, rather than instruments of domination and enrichment in the hands of a handful of billionaires. And time is short, because it’s becoming more and more obvious that this story is going to end very badly. Yet in principle, if AI were used properly and with a real sense of ethics, it could be highly beneficial, helping to advance the sciences for example. And as for your existential problems, it’s nicer to talk about them with real humans, even if they don’t always agree with you. Tell us what you think in the comments. Here or anywhere else.

I spent a lot of time on this article. Both letting the ideas mature and writing it. In return, if you found it useful, thanks for taking a few seconds to share it so other people can read it. You can also print it out and pass it around, it’s copyleft. Thanks for reading this far and see you very soon for more adventures.

